What Is Data Automation?

A Practitioner's Guide to Data Automation

Most data teams aren't short on data. They're short on time. The average data engineer spends more than half their week on work that could, in theory, run itself: pulling from source systems, transforming raw records, refreshing pipelines, chasing failures at 2am. Data automation is what changes that equation.

This article breaks down what data automation actually is, how it works under the hood, and where it fits into a modern data stack. No fluff. Just the mechanics.

The Short Answer

Data automation is the practice of moving, transforming, and delivering data without manual intervention. It includes what is often called automated data processing, but also covers the orchestration, testing, and monitoring around it. Instead of a data engineer writing a script, running it, checking the output, and kicking off the next step by hand, automation handles the full sequence: ingest, transform, load, test, alert.

The goal isn't to replace data engineers. It's to give them back the hours they're currently spending keeping the lights on.

Why Manual Data Work Breaks at Scale

Picture a mid-size company with 40 data sources, three BI tools, and a data team of six. Every new source means a new ingestion job. Every schema change in a source system breaks a downstream pipeline. Every business request ("can you add this filter?") creates a backlog that teams can't clear.

That's not a people problem. It's an architecture problem.

Manual pipelines don't scale. They require constant maintenance, degrade under change, and create single points of failure around whoever built them. When that person leaves, the institution loses context that's almost impossible to rebuild.

The consequences are real. A global optics manufacturer with 34,000 SKUs spent $50,000 across internal builds, consultants, and a specialist vendor attempting to ship a single marketing pipeline. It stalled for over a year. The company's Global Analytics Manager described life without that pipeline plainly: "We were flying without much vision or foresight as to where our dollars were best spent." Campaigns kept spending while the team waited for visibility they never got. Read the full story.

Data automation replaces fragile, hand-built pipelines with systems designed to handle change. The pipeline doesn't break when the source schema updates. The transformation logic doesn't live in a script only one person understands. The refresh doesn't require someone to manually trigger a job at 6am.

What Does Data Automation Include?

Data ingestion automation pulls data from source systems on a schedule or in response to events. Instead of writing a custom connector for every API, cloud storage bucket, or database, automated data integration handles the extraction reliably across hundreds of sources simultaneously.
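To make the connector idea concrete, here is a minimal sketch in Python, assuming a hypothetical `Connector` interface with a single `extract(since)` method and an in-memory stand-in for a real source. It shows two habits most ingestion setups share: incremental extraction against a stored watermark, and isolating failures so one broken source does not block the rest. The names `InMemoryConnector`, `ingest_all`, and the `updated_at` field are illustrative, not any product's API, and the loop runs sources one after another for clarity rather than in parallel.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Protocol


class Connector(Protocol):
    """Hypothetical interface every source connector implements."""
    name: str

    def extract(self, since: datetime) -> list[dict]:
        """Return records updated after `since`."""
        ...


@dataclass
class InMemoryConnector:
    """Stand-in for an API, bucket, or database source (illustrative only)."""
    name: str
    records: list[dict]

    def extract(self, since: datetime) -> list[dict]:
        return [r for r in self.records if r["updated_at"] > since]


@dataclass
class IngestionRun:
    loaded: dict[str, list[dict]] = field(default_factory=dict)
    failed: dict[str, str] = field(default_factory=dict)


def ingest_all(connectors: list[Connector], watermarks: dict[str, datetime]) -> IngestionRun:
    """Pull incrementally from every source; one failing source does not block the others."""
    run = IngestionRun()
    for conn in connectors:
        since = watermarks.get(conn.name, datetime.min)
        try:
            rows = conn.extract(since)
        except Exception as exc:  # record the failure and move on to the next source
            run.failed[conn.name] = repr(exc)
            continue
        run.loaded[conn.name] = rows
        if rows:
            # Advance the watermark so the next scheduled run only fetches newer records.
            watermarks[conn.name] = max(r["updated_at"] for r in rows)
    return run
```

On a schedule, the same call runs again with the updated `watermarks` dict (persisted somewhere durable in practice), so only records changed since the last successful pull are fetched.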

Transformation automation applies business logic to raw data: cleaning, joining, aggregating, reshaping. For teams running modern data warehouses, data warehouse automation handles the logic layer automatically, so engineers define the rules once, and the system applies them consistently regardless of volume or frequency. This is the core of what ETL and ELT pipelines have always aimed to do, but without the manual overhead that traditional approaches require.
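The "define the rules once" idea can be sketched in plain Python, assuming rows arrive as dictionaries. Warehouse automation tools typically express this as SQL or generated transformation code instead; this stand-in only illustrates the pattern of an ordered rule list applied identically to every batch. The rule names and columns (`order_id`, `currency`, `gross_amount`, `discount`) are hypothetical.

```python
from typing import Callable, Optional

Row = dict
# A rule takes one row and returns a new row, or None to drop it.
Rule = Callable[[Row], Optional[Row]]


def drop_missing_order_id(row: Row) -> Optional[Row]:
    return row if row.get("order_id") is not None else None


def normalize_currency(row: Row) -> Optional[Row]:
    return {**row, "currency": str(row.get("currency", "")).strip().upper()}


def add_net_amount(row: Row) -> Optional[Row]:
    return {**row, "net_amount": round(row["gross_amount"] - row.get("discount", 0.0), 2)}


# Defined once; every batch, large or small, goes through the same ordered rules.
RULES: list[Rule] = [drop_missing_order_id, normalize_currency, add_net_amount]


def apply_rules(rows: list[Row], rules: list[Rule] = RULES) -> list[Row]:
    out = []
    for row in rows:
        for rule in rules:
            row = rule(row)
            if row is None:  # a rule rejected the row; skip the remaining rules
                break
        else:
            out.append(row)  # only rows that survived every rule are kept
    return out
```

Because each rule returns a new dictionary rather than editing its input, the raw batch is left untouched and can be reprocessed if a rule changes.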

Orchestration coordinates everything else. It sequences jobs, manages dependencies, and decides what runs when. If ingestion from a source completes successfully, orchestration triggers the downstream transformation. If it fails, it alerts the right person and stops the chain before bad data propagates.
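A minimal sketch of that behavior, assuming tasks are plain Python callables and dependencies are given as a mapping from each task to its upstream tasks. It uses the standard library's `graphlib.TopologicalSorter` to order the work; `run_dag` and the `alert` callback are hypothetical names, and a production orchestrator would add scheduling, retries, and persisted run state.

```python
from graphlib import TopologicalSorter
from typing import Callable


def run_dag(tasks: dict[str, Callable[[], None]],
            deps: dict[str, set[str]],
            alert: Callable[[str, Exception], None]) -> dict[str, str]:
    """Run tasks in dependency order; on failure, alert and skip everything downstream.

    Every name used in `deps` must also be a key in `tasks`.
    """
    graph = {name: deps.get(name, set()) for name in tasks}  # task -> its upstream tasks
    status: dict[str, str] = {}
    for name in TopologicalSorter(graph).static_order():
        if any(status[u] != "success" for u in graph[name]):
            status[name] = "skipped"  # an upstream task failed or was skipped
            continue
        try:
            tasks[name]()
            status[name] = "success"
        except Exception as exc:
            status[name] = "failed"
            alert(name, exc)  # notify; the skip rule above stops the chain
    return status
```

Because `static_order()` always yields a task after everything it depends on, checking upstream status is enough to stop the chain: one failed ingestion marks its transformation and the dashboard refresh as skipped, while unrelated branches still run. A dependency cycle raises `graphlib.CycleError` before anything executes.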

Testing and validation ensure data quality at each step. This matters more than most teams expect. Nature's Touch, a global frozen foods supplier managing 30 crops across six geographic locations, ran its supply chain data through ERP and MRP systems daily for years. Neither system flagged a persistent error in a pounds-to-kilograms conversion formula buried in a 72-page Excel model. The variance it created: $500,000 per year. Automated validation, applied at the right point in the pipeline, caught what enterprise platforms couldn't. Read the Nature's Touch story.
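A check of the kind described here can be small. The sketch below assumes hypothetical `weight_lb` and `weight_kg` columns and recomputes the conversion independently using the exact definition 1 lb = 0.45359237 kg, flagging any row that disagrees beyond a relative tolerance (0.1% here, an arbitrary choice). It illustrates reconciliation-style validation in general; it is not a description of how the Nature's Touch error was actually found.

```python
import math

LB_TO_KG = 0.45359237  # exact definition of the international avoirdupois pound


def check_lb_to_kg(rows: list[dict], rel_tol: float = 1e-3) -> list[dict]:
    """Flag rows whose stored kilograms disagree with an independent recomputation."""
    flagged = []
    for row in rows:
        expected = row["weight_lb"] * LB_TO_KG
        if not math.isclose(row["weight_kg"], expected, rel_tol=rel_tol):
            # Attach the expected value so whoever investigates has the context.
            flagged.append({**row, "expected_kg": round(expected, 3)})
    return flagged
```

A row whose kilograms were produced by multiplying by 2.2046 instead of dividing by it, for example, would be flagged on the first run.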

Monitoring and alerting closes the loop. When a pipeline fails, a volume threshold is missed, or latency spikes, the right person gets notified automatically. The pipeline becomes auditable rather than opaque. Schema drift, one of the most common causes of silent pipeline failure, becomes detectable and manageable rather than a recurring emergency.
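Schema drift and volume checks are straightforward to sketch. The version below assumes batches arrive as lists of dictionaries and that an expected schema maps column names to Python types; `detect_schema_drift`, `check_volume`, `monitor`, and the `notify` callback are hypothetical names. It reports missing columns, new columns, type changes, and a row count below a chosen threshold.

```python
from typing import Callable


def detect_schema_drift(expected: dict[str, type], rows: list[dict]) -> list[str]:
    """Compare a batch's columns and value types with the expected schema."""
    observed: dict[str, set[type]] = {}
    for row in rows:
        for col, val in row.items():
            types = observed.setdefault(col, set())
            if val is not None:  # nulls say nothing about a column's type
                types.add(type(val))
    issues = [f"missing column: {c}" for c in expected.keys() - observed.keys()]
    issues += [f"new column: {c}" for c in observed.keys() - expected.keys()]
    for col in expected.keys() & observed.keys():
        unexpected = observed[col] - {expected[col]}
        if unexpected:
            issues.append(f"type change in {col}: {sorted(t.__name__ for t in unexpected)}")
    return sorted(issues)


def check_volume(row_count: int, min_rows: int) -> list[str]:
    return [f"volume below threshold: {row_count} < {min_rows}"] if row_count < min_rows else []


def monitor(batch: list[dict], expected: dict[str, type], min_rows: int,
            notify: Callable[[str], None]) -> list[str]:
    """Run all checks and notify once per issue; an empty list means a healthy batch."""
    issues = detect_schema_drift(expected, batch) + check_volume(len(batch), min_rows)
    for issue in issues:
        notify(issue)
    return issues
```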

Together, these layers form an automated data pipeline, a system where data flows from source to destination with minimal human touchpoints and maximum observability.

How Data Pipeline Automation Works

Walk through a practical example. Say you're a data engineer at a retail company and your job is to keep the weekly sales dashboard accurate.

Without automation, the sequence looks like this: log into the ERP, export last week's transactions, upload them to the data warehouse, run the transformation script, check for errors, fix any schema issues manually, and ping the BI team to refresh the dashboard. Every week. Same steps. Every week.

With data pipeline automation, the system handles all of it. A connector pulls transaction data on a schedule. A transformation job reshapes it to match the warehouse schema. An orchestrator confirms the transformation completed before triggering the dashboard refresh. A test suite validates row counts and checks for nulls after each step. If anything fails, you get the context you need to fix it.
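The weekly sales flow can be sketched as a single function, assuming each stage is passed in as a callable: `extract`, `transform`, `load`, and `refresh_dashboard` are hypothetical hooks standing in for the ERP connector, the transformation job, the warehouse load, and the BI refresh. The column names (`transaction_id`, `store_id`, `net_sales`) are also assumptions. Row-count and null tests run after extraction and after transformation, and a failure raises an exception naming the step, column, and row, so nothing downstream runs on bad data.

```python
from typing import Callable

Rows = list[dict]


class CheckFailed(Exception):
    """A data test failed; the message says which step, column, and row."""


def assert_rows(step: str, rows: Rows, min_rows: int, not_null: list[str]) -> None:
    """Row-count and null checks, run after each step."""
    if len(rows) < min_rows:
        raise CheckFailed(f"{step}: expected at least {min_rows} rows, got {len(rows)}")
    for i, row in enumerate(rows):
        for col in not_null:
            if row.get(col) is None:
                raise CheckFailed(f"{step}: null {col!r} in row {i}")


def run_weekly_sales(extract: Callable[[], Rows],
                     transform: Callable[[Rows], Rows],
                     load: Callable[[Rows], None],
                     refresh_dashboard: Callable[[], None],
                     min_rows: int = 1) -> str:
    raw = extract()  # connector pulls the week's transactions
    assert_rows("extract", raw, min_rows, ["transaction_id"])
    shaped = transform(raw)  # reshape to match the warehouse schema
    assert_rows("transform", shaped, min_rows, ["transaction_id", "store_id", "net_sales"])
    load(shaped)  # reached only if every check above passed
    refresh_dashboard()
    return f"refreshed with {len(shaped)} rows"
```

In a scheduled deployment, an orchestrator would call `run_weekly_sales` and route any `CheckFailed` message to whoever is on call.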

You're not doing the work anymore. The system is. Your job shifts from running the pipeline to designing and improving it.

Nature's Touch saw this play out directly. A reconciliation process that previously took 48 hours of manual analysis now completes in 10 minutes.

Where AI Data Automation Changes the Picture

Traditional data automation still required someone to build and maintain the logic: write the transformation, configure the connector, define the tests. That work was still manual, even if the pipeline ran automatically once built.

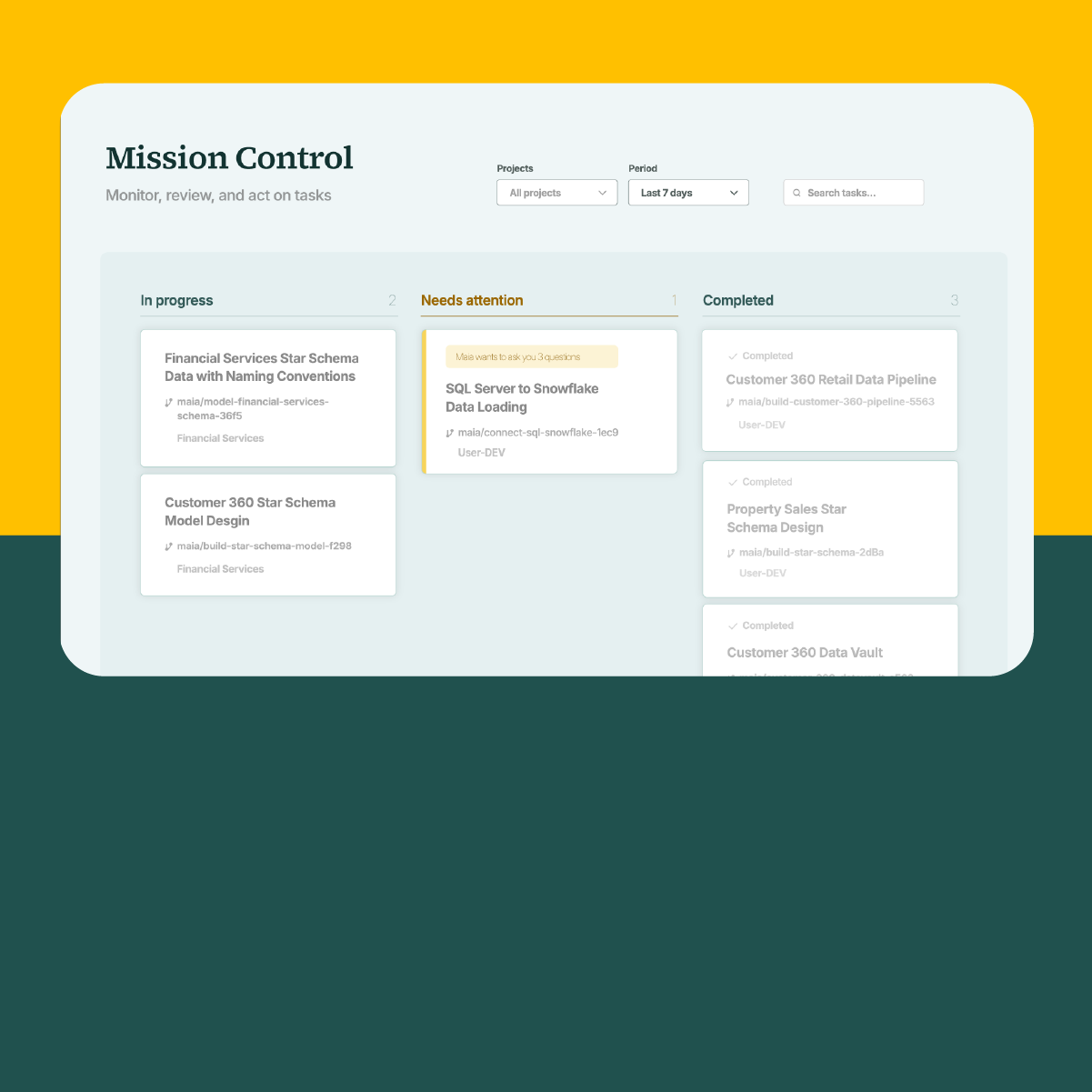

AI Data Automation goes further. It doesn't assist engineers one task at a time. It autonomously generates, tests, and maintains pipeline logic on their behalf, adapting as source systems change. Data teams describe what they need; the platform builds it. For a detailed breakdown of how this differs from traditional approaches, see our comparison of traditional automation vs AI Data Automation.

This is a shift from manual data operations to automated data production. The distinction matters. A copilot helps you write faster. An AI Data Automation platform changes what gets produced and who produces it.

St. James's Place (SJP), one of the UK's leading wealth management firms, tested this in a proof of concept across two use cases. Their first: sentiment analysis of thousands of client survey responses, a process that previously consumed around 4,000 hours per year. Maia, the AI Data Automation platform, completed the same workflow end to end in 16 hours, a 1,300% efficiency gain. Their second use case was ETL migration, where manual rewrites were consuming days per pipeline. With AI Data Automation, that effort dropped by roughly two thirds. Kelly Maggs, Director for Data Architecture Platform and Engineering at SJP, put it plainly: "The big productivity numbers you hear about AI can actually be real." Read the SJP story.

The impact extends to team structure too. The Head of Data at a leading optics manufacturer described what changed after adopting Maia: "We were going to hire five data engineers, but now we don't need to. All I need is me and one junior engineer to deliver our whole data strategy. The $500,000 savings in wages will cover my entire data stack and more. That's the immediate impact Maia has had on us." Read the full story.

Common Misconceptions

"Data automation replaces data engineers." It doesn't. It removes the low-value work: the repetitive ingestion jobs, the manual refresh cycles, the maintenance grind. What's left is the interesting work, strategic projects, product development, the initiatives that actually move the business. Teams that automate the basics don't shrink. They take on work they previously couldn't. For more on how AI is redefining the data engineering role, the shift is structural, not cosmetic.

"You need a massive stack to automate." Not anymore. Cloud-based platforms handle ingestion, transformation, orchestration, and testing without teams having to deploy and maintain infrastructure. A two-person data team can run a fully automated pipeline architecture today that would have required significantly larger headcount five years ago.

"Automation means less control." The opposite is often true. Automated pipelines come with observability built in. You see every run, every failure, every latency spike. Manual pipelines are opaque by comparison. When Nature's Touch ran manual reconciliations, errors compounded undetected for years. Automation made the pipeline auditable, and caught a half-million-dollar variance in the process.

Choosing Where to Start

If you're building toward a more automated data stack, start with the part of your pipeline that fails most often or takes the most manual time to maintain.

For most teams, that's ingestion. Source system schemas change constantly. Connectors break. API versions get deprecated. Automating ingestion and data integration takes the most fragile part of the pipeline off the team's plate and gives you a stable foundation to build on. Maia connects to hundreds of source systems out of the box.

Once ingestion is stable, transformation is usually next. Codify your transformation logic. Move it out of ad hoc scripts and into a system with version control, testing, and documentation. That shift alone reduces the blast radius when something changes upstream.
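Codified transformation logic can be as simple as a documented pure function with a test beside it. The sketch below assumes a hypothetical `weekly_sales_by_store` transformation and a pytest-style test function (pytest collects functions whose names start with `test_`); in a real repository the two would live in separate files under version control, with the test running on every change.

```python
"""weekly_sales.py: a hypothetical transformation module kept under version control."""
from collections import defaultdict


def weekly_sales_by_store(rows: list[dict]) -> dict[str, float]:
    """Total net sales per store for one week of transactions.

    Documented contract: refund rows are excluded and totals are rounded to cents.
    """
    totals: dict[str, float] = defaultdict(float)
    for row in rows:
        if row.get("is_refund"):
            continue
        totals[row["store_id"]] += row["net_sales"]
    return {store: round(total, 2) for store, total in totals.items()}


# test_weekly_sales.py: runs on every change, so an edit that breaks the
# contract fails review instead of reaching the dashboard.
def test_excludes_refunds_and_rounds_to_cents():
    rows = [
        {"store_id": "S1", "net_sales": 10.0},
        {"store_id": "S1", "net_sales": 2.5},
        {"store_id": "S1", "net_sales": 5.0, "is_refund": True},
        {"store_id": "S2", "net_sales": 0.1},
        {"store_id": "S2", "net_sales": 0.2},  # 0.1 + 0.2 is not exactly 0.3 in floating point
    ]
    assert weekly_sales_by_store(rows) == {"S1": 12.5, "S2": 0.3}
```

The test doubles as documentation: anyone changing the refund rule or the rounding sees exactly which behavior they are about to alter.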

Orchestration and monitoring follow naturally once you have ingestion and transformation under control. At that point, the pipeline can genuinely run itself, and your team's job becomes improving the system rather than operating it. If you're running legacy ETL tools and wondering whether modernization is worth the effort, this breakdown of the hidden costs of legacy ETL is a useful starting point.

The Real Shift

The teams that get data automation right don't spend weekends fixing broken pipelines. They spend that time building data products that move the business. But the bigger shift isn't operational. It's strategic. When data engineering becomes automated data production, smaller teams deliver enterprise-scale outcomes, and take on work that previously required much larger ones. That's not a marginal efficiency gain. It's a different model of what a data team can do.

The manual alternative has a cost too. It just shows up slowly, in backlogs and burnout and the constant feeling that the team is running to stand still.

Frequently Asked Questions

How is data automation different from manual data entry automation?

Manual data entry automation typically refers to tools that replicate human input, such as form-filling, copy-paste, or basic record transfer between systems. Data automation operates at the pipeline level: it moves, transforms, and validates data at scale across connected systems, handling volume and complexity that no amount of entry automation could address.

What is data warehouse automation?

Data warehouse automation refers to automating the logic layer of a data warehouse: the transformation, modeling, and loading steps that prepare raw data for analysis. Data warehouse automation tools typically handle this by generating SQL or transformation code that adapts as schemas change, rather than requiring engineers to write and maintain that logic by hand.
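A minimal sketch of the generation idea, assuming the current source columns are read from the warehouse catalog and passed in as a list. `generate_model_sql`, the table names, and the mapping dictionaries are hypothetical. `CREATE OR REPLACE TABLE ... AS SELECT` is accepted by several cloud warehouses, but syntax varies by dialect, and identifiers are interpolated without quoting on the assumption that they come from trusted metadata.

```python
def generate_model_sql(source_table: str, target_table: str,
                       source_columns: list[str],
                       renames: dict[str, str],
                       casts: dict[str, str]) -> str:
    """Build a CREATE TABLE ... AS SELECT from the source's current column list.

    New source columns pass through unchanged; mapping entries for columns that
    no longer exist raise an error instead of silently producing broken SQL.
    """
    stale = (renames.keys() | casts.keys()) - set(source_columns)
    if stale:
        raise ValueError(f"mappings reference dropped columns: {sorted(stale)}")
    select_list = []
    for col in source_columns:
        expr = f"CAST({col} AS {casts[col]})" if col in casts else col
        select_list.append(f"    {expr} AS {renames.get(col, col)}")
    body = ",\n".join(select_list)
    return f"CREATE OR REPLACE TABLE {target_table} AS\nSELECT\n{body}\nFROM {source_table};"
```

When the source adds a column, regenerating the SQL passes it through automatically; when a mapped column disappears, generation fails loudly instead of producing a model that breaks at query time.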

What is data pipeline automation?

Data pipeline automation is the practice of running the full sequence of data movement steps (ingestion, transformation, loading, and validation) without manual execution at each stage. Connectors pull from source systems on a schedule, transformation logic reshapes the data automatically, and orchestration manages job sequencing and dependencies. Once built, the pipeline runs without intervention.

How does automated data processing relate to data automation?

Automated data processing refers to the mechanical act of moving and transforming data without human intervention. Data automation is the broader practice: it encompasses automated data processing but also includes orchestration, testing, monitoring, and the full pipeline lifecycle. Think of automated data processing as one layer within a complete data automation approach.

Book a Maia demo.
