Traditional Automation vs. AI Data Automation

What's the Real Difference Between Traditional Automation and AI Data Automation?

For decades, data pipelines ran on scripts and schedules. Cron jobs. Hand-coded ETL. Rigid rules designed for a world where data was predictable and change was slow.

That world is gone.

As data volumes grow and source systems shift without warning, traditional automation is showing its limits. The question isn't whether to move beyond it. It's how far to go, and how fast.

TL;DR

Traditional automation runs on fixed scripts and schedules. It works in stable environments but breaks the moment something changes. AI Data Automation introduces adaptive, autonomous systems that monitor pipelines, detect issues before they escalate, self-heal, and optimize without needing a human in the loop for every decision.

How Does Agentic AI Differ from Traditional Automation?

Traditional Automation runs on fixed scripts and schedules — cron jobs, ETL pipelines, event triggers. It works in stable environments but breaks easily when schemas change or sources shift. Recovery is manual.

Agentic AI is goal-driven and adaptive. It monitors pipelines, detects anomalies and schema drift, self-heals, and optimizes — within your governance guardrails.

Automation follows instructions. Agentic AI understands the goal and keeps things running, even when things change.

Key Takeaways

- Traditional automation is rigid and reactive — it breaks when data changes and requires manual fixes

- Generative AI accelerates development through natural language inputs and AI-generated logic, but still depends on human direction

- Agentic AI introduces autonomy — self-monitoring, self-healing, and self-optimizing data pipelines that adapt without constant human intervention

- AI Data Automation delivers measurable business impact: fewer engineering bottlenecks, faster time-to-insight, and the capacity to scale without growing headcount

Traditional Automation: The Reliable but Rigid Workhorse

Many legacy data pipelines look something like this: a Python script triggered by a cron job, designed to move or transform data on a schedule. These systems work well — until something changes.
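As a minimal sketch of that brittleness, assume a hypothetical nightly job whose transform hard-codes its source column names. The function `transform_orders`, the columns `order_id` and `amount_usd`, and the crontab line in the comment are all illustrative assumptions, not taken from any real system.

```python
# Hypothetical nightly job, scheduled by a crontab entry such as:
#   0 2 * * * /usr/bin/python3 /opt/etl/orders_job.py
# Every name and path here is an illustrative assumption.


def transform_orders(rows):
    """Convert raw order rows to a reporting shape.

    Column names are hard-coded, so any upstream rename raises KeyError.
    """
    return [
        {"order_id": row["order_id"], "amount_cents": round(row["amount_usd"] * 100)}
        for row in rows
    ]


if __name__ == "__main__":
    # Works as long as the source keeps its original shape.
    stable = [{"order_id": 1, "amount_usd": 19.99}]
    print(transform_orders(stable))

    # The source team renames amount_usd to total_usd; the job now fails
    # and stays broken until someone edits the script by hand.
    drifted = [{"order_id": 2, "total_usd": 5.00}]
    try:
        transform_orders(drifted)
    except KeyError as missing:
        print(f"pipeline failed, manual fix needed: missing column {missing}")
```

Nothing in the script is wrong for the data it was written against. The fragility comes from the assumption, baked into the code, that the source will never change.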

Manual data work isn't just slow. It compounds. Every fragile dependency is a future incident, and every incident pulls engineers away from the work that actually moves the business forward.

Limitations include:

- Brittle pipelines that break when schemas change

- Manual recovery required for errors, source changes, and tuning

- High maintenance overhead that scales with complexity, not with value

- Engineering time absorbed by tickets instead of builds

These pipelines don't learn. They don't adapt. They do exactly what they're told — no more, no less.

Generative AI: The Intelligent Assistant

Generative AI adds a more responsive layer of intelligence. Rather than coding every transformation by hand, developers can describe their intent in plain English and let AI generate the SQL, mappings, or full pipeline logic.

Capabilities include:

- Code generation from natural language prompts

- Pattern-based mappings between complex schemas

- Automated documentation and lineage tracking

- Faster delivery through AI-assisted tooling

This shifts data engineers from writing code line-by-line to reviewing, refining, and accelerating delivery. That's a real productivity gain. But generative AI is still a co-pilot — it needs a human hand on the wheel.

Agentic AI: The Autonomous Operator

Agentic AI goes further. These systems act with intent. They don't wait for triggers — they detect problems, decide on solutions, and act.

Agentic AI systems can:

- Monitor data pipelines continuously

- Detect schema drift or unexpected values

- Generate and test fixes automatically

- Learn from outcomes and improve over time

Imagine a pipeline that notices a source API has changed, updates the transformation logic, validates the new output, and deploys — all with minimal human intervention, within your governance guardrails.
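The detect, fix, validate loop described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how any particular agentic platform works: the schema, the pre-approved alias table standing in for governance guardrails, and every function name are hypothetical.

```python
# Illustrative sketch only: the schema, alias table, and function names are
# assumptions. A real agentic system would do far more than rename columns.

EXPECTED_SCHEMA = {"order_id": int, "amount_usd": float}

# Renames that a (hypothetical) governance policy has pre-approved.
APPROVED_ALIASES = {"total_usd": "amount_usd"}


def detect_drift(row, expected=EXPECTED_SCHEMA):
    """Return (missing, unexpected) sets of column names for one row."""
    missing = set(expected) - set(row)
    unexpected = set(row) - set(expected)
    return missing, unexpected


def propose_mapping(missing, unexpected, aliases=APPROVED_ALIASES):
    """Map unexpected columns onto missing ones, using approved aliases only."""
    return {src: aliases[src] for src in unexpected if aliases.get(src) in missing}


def apply_mapping(row, mapping):
    """Rename columns according to the mapping, leaving other columns untouched."""
    return {mapping.get(key, key): value for key, value in row.items()}


def validate(row, expected=EXPECTED_SCHEMA):
    """Check that the row has exactly the expected columns and value types."""
    return set(row) == set(expected) and all(
        isinstance(row[name], kind) for name, kind in expected.items()
    )


def heal(row):
    """Accept valid rows, try an approved fix on drifted ones, else escalate.

    Returns (row, status). A fix is accepted only if the result validates.
    """
    if validate(row):
        return row, "ok"
    missing, unexpected = detect_drift(row)
    candidate = apply_mapping(row, propose_mapping(missing, unexpected))
    if validate(candidate):
        return candidate, "healed"
    return row, "escalate_to_human"
```

In this sketch a row with `total_usd` in place of `amount_usd` is renamed and accepted, while a row with an unrecognized column is escalated rather than guessed at. That split is the point of the guardrails: the system acts alone only where a fix has been approved and can be validated.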

This isn't automation in the traditional sense. It's autonomous data engineering.

Comparing the Three Approaches

Think of it this way:

Automation: "Do exactly what I tell you."

Generative AI: "Describe what you want and I'll draft it for you to review."

Agentic AI: "I understand the goal and I'll keep it running, even when things change."

With agentic AI, data engineers stop being platform users and start being platform managers.

Real-World Implications

Error handling: Traditional pipelines often fail silently, or page an engineer and wait for a manual fix. Agentic AI detects anomalies, diagnoses root causes, and resolves issues autonomously, reducing mean time to resolution and minimizing downstream impact.
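One simple way to catch a silent failure is to compare each run's row count against recent history. The sketch below is an assumption about what such a check could look like, not a description of any specific product. The function name `is_anomalous` and the threshold of three standard deviations are illustrative choices.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    """Flag `latest` if it sits more than `threshold` sample standard
    deviations away from the mean of `history`.
    """
    if len(history) < 2:
        return False  # not enough history to judge
    spread = stdev(history)
    if spread == 0:
        # Perfectly constant history: any change at all is suspicious.
        return latest != history[0]
    return abs(latest - mean(history)) / spread > threshold


# Hypothetical row counts from the last five nightly loads.
recent_counts = [1000, 1020, 980, 1010, 990]
print(is_anomalous(recent_counts, 1005))  # a typical load
print(is_anomalous(recent_counts, 120))   # a load that silently dropped most rows
```

A check like this covers detection only. Diagnosing the root cause and resolving it are separate steps, and they are where an agentic system would need to go beyond a fixed rule.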

Schema changes: Even minor schema changes can break conventional pipelines and require human rework. Agentic AI proactively identifies drift, adapts the pipeline, and maintains continuity.

Scaling: Traditional automation scales by adding engineers. Agentic AI scales by adding capacity. Rethinking how data engineering works in the AI era isn't optional anymore. It's the competitive gap.

When to Use What

Not every problem needs AI. Understanding where each approach fits helps you apply the right level of intelligence.

Use traditional automation when:

- Pipelines are stable and rarely change

- You need deterministic, static logic

Use generative AI when:

- You need to speed up development

- You want to translate business requirements into technical specs quickly

- You're supporting lean engineering teams or citizen data users

Use agentic AI when:

- You operate in fast-changing, dynamic environments

- You need pipelines to adapt on their own

- You want to reduce manual monitoring and firefighting

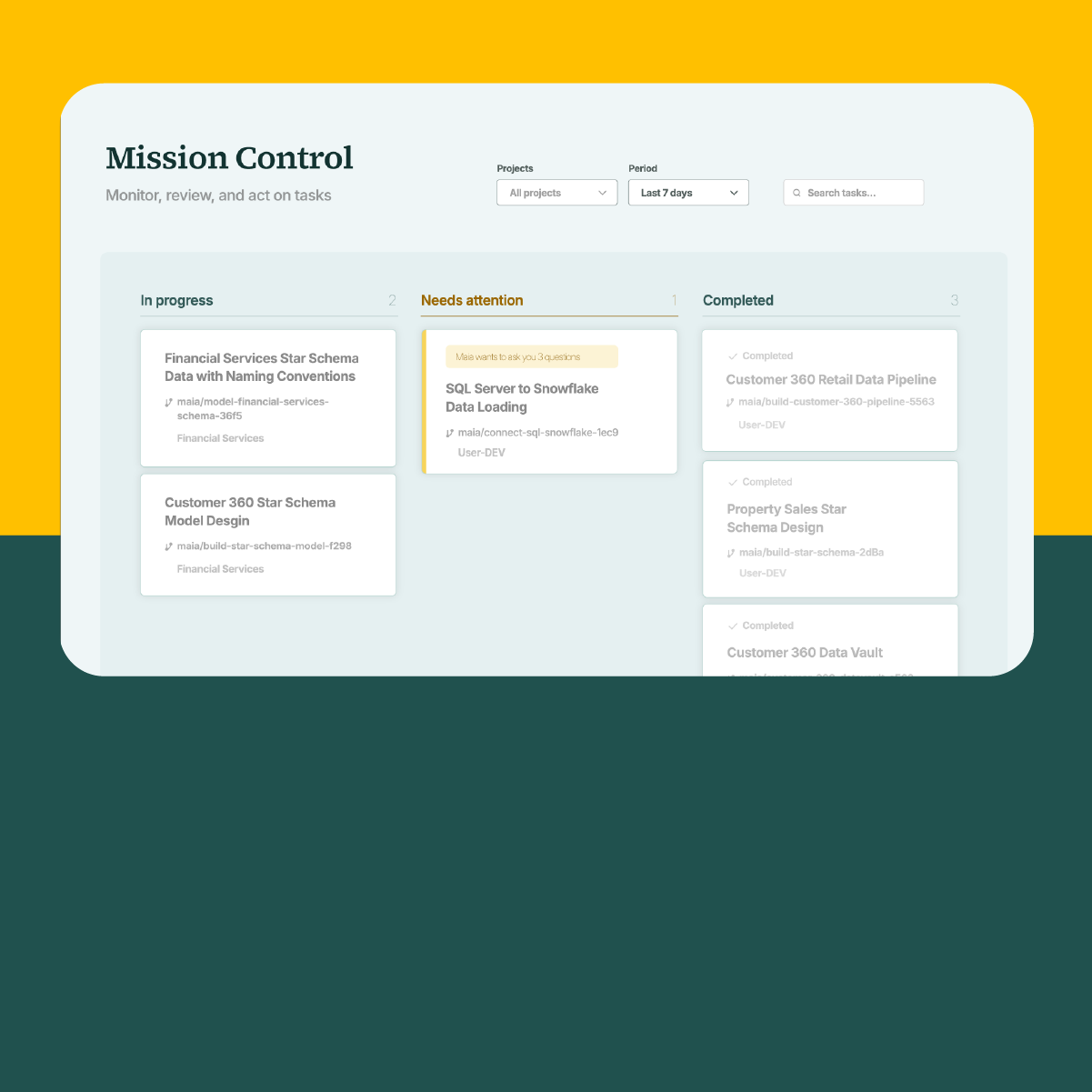

Meet Maia: AI Data Automation in Practice

Maia sits in a different category entirely. It's the first AI Data Automation platform — built not to assist engineers, but to act as a full agentic data team operating within your governance guardrails.

Where legacy tools hand you a better interface and call it AI, Maia plans, builds, monitors, and evolves data pipelines autonomously, within the governance structures your organization already has.

Maia is built on three components:

Maia Team — expert agents working 24/7 to plan, execute, and monitor data engineering tasks. Autonomous execution at machine speed, with human oversight built in.

Maia Context Engine — digital organizational intelligence that bridges the context gap, capturing institutional knowledge and governance standards to ensure every pipeline operates on trusted, consistent logic.

Maia Foundation — enterprise-grade infrastructure supporting 150+ pre-built connectors and multi-workload deployment across the data stack.

Together, they turn the case for agentic AI in this article into something you can actually run.

The Strategic Case for AI Data Automation

AI Data Automation delivers measurable benefits beyond traditional automation. Key advantages include:

- Business agility: Respond faster to shifting data sources and business requirements

- Resource efficiency: Cut manual maintenance and free up engineering time for higher-value work

- Faster time-to-value: Shorten development cycles and accelerate insight delivery

- Scalability: Handle greater data volumes without growing headcount

- Broader capacity: Non-technical users can take on data operations work, reducing the ticket queue and freeing engineers for higher-value builds

Manual Data Work Is a Choice

The cron job had a good run. So did the hand-coded ETL pipeline. But as data complexity grows, so does the hidden cost of keeping that infrastructure alive — the constant maintenance, the fragile dependencies, the engineers spending their weeks on tickets instead of building.

Organizations that move beyond scripts and schedules toward intelligent, human-in-the-loop agentic systems don't just move faster. They free their teams to do the work that actually matters.

The question for data leaders isn't whether to adopt AI Data Automation. It's how much longer manual data work stays a choice.

Enjoy the freedom to do more with Maia on your side.
