Building Agentic Workflows on a Composable Data Architecture

Manual data work is the silent tax of the AI economy. Teams drown keeping the lights on. Backlogs grow. And every new AI initiative runs straight into the same wall: the pipelines, transformations, and data products that need to be built, fixed, and maintained by hand.

Agentic AI changes the equation. But agents can't operate on top of the static, monolithic platforms most enterprises are still running. To eliminate manual data work at scale, you need a foundation that's as modular and adaptive as the agents themselves.

That's where composable architecture comes in.

TL;DR

Agentic workflows use autonomous AI agents to plan, execute, and evolve data work without a human in every loop. They need a composable data architecture to thrive: modular, API-connected, and cloud-native, so agents can plug in, scale, and collaborate without breaking what's already there.

Key takeaways

- Agentic workflows replace manual data work with AI agents that make decisions, adapt, and coordinate autonomously

- Composable architecture is the enabler: modular, API-driven systems let agents operate without ripping out existing pipelines

- Multi-agent workflows outperform single, monolithic automation. Specialized agents working together cover more ground, faster

- Experimentation gets cheaper. You can test new agents inside isolated components instead of rewriting the stack

- Resilience is designed in. Agents reroute around failures and escalate to humans when confidence drops

This is the third post in the series on agentic AI and data engineering:

- What Is Agentic AI?

- How Agentic AI Is Redefining Data Engineering

- Building Agentic Workflows on a Composable Data Architecture (this one)

What agentic workflows actually are

At their core, agentic workflows are data pipelines run by autonomous, goal-driven agents that can decide, act, and coordinate with other agents or systems without waiting for instructions at every step.

Traditional workflows are rule-based and static. Agentic AI is different:

- Dynamic decision-making. Agents respond to real-time data and shifting objectives, not hard-coded logic

- Specialized collaboration. Different agents handle different jobs: extraction, cleaning, transformation, model training

- Continuous alignment. Workflows stay calibrated to business context and organizational standards as they run

- Autonomous problem-solving. When something breaks or changes, the system adapts

But agents can't function in isolation. They need an architectural foundation that's just as modular and responsive as they are.

Composable data architecture: the foundation agents need

Composable data architecture replaces rigid, all-in-one platforms with modular, interoperable components for ingestion, transformation, storage, and analytics. Each piece is built to plug and play, not to lock you in. It's a direct response to a problem most enterprises already feel: data architecture built for the analytics era wasn't designed for AI workloads.

Multi-agent workflows thrive in this kind of environment. Each component can evolve independently, while still integrating cleanly with everything else.

Three principles make it work:

Decoupling. Components evolve independently without breaking the pipeline. Upgrade your transformation layer without touching storage or analytics.

API connectivity. Systems talk to each other through defined interfaces. That's what enables real-time data exchange and orchestration across the stack.

Cloud-native flexibility. Infrastructure scales elastically with workloads and agents, so variable demand doesn't require manual intervention.

Put those together and you get a foundation agents can actually operate on.
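To make decoupling concrete, here is a minimal Python sketch, assuming components interact only through small typed interfaces. `Transformer`, `Storage`, `run_pipeline`, and the example classes are hypothetical names chosen for illustration, not part of Maia or any other product's API. Upgrading the transformation layer means passing a different object; the storage code never changes.

```python
from typing import Protocol


class Transformer(Protocol):
    """Contract any transformation component must satisfy (hypothetical)."""

    def transform(self, rows: list[dict]) -> list[dict]: ...


class Storage(Protocol):
    """Contract any storage component must satisfy (hypothetical)."""

    def write(self, rows: list[dict]) -> None: ...


class LowercaseEmails:
    """Hypothetical v1 transformer: normalizes email casing."""

    def transform(self, rows: list[dict]) -> list[dict]:
        return [{**r, "email": r["email"].lower()} for r in rows]


class LowercaseAndDedupe:
    """Hypothetical v2 transformer: also drops duplicate emails."""

    def transform(self, rows: list[dict]) -> list[dict]:
        seen: set[str] = set()
        out = []
        for r in LowercaseEmails().transform(rows):
            if r["email"] not in seen:
                seen.add(r["email"])
                out.append(r)
        return out


class InMemoryStorage:
    """Stand-in for a warehouse table."""

    def __init__(self) -> None:
        self.rows: list[dict] = []

    def write(self, rows: list[dict]) -> None:
        self.rows.extend(rows)


def run_pipeline(rows: list[dict], transformer: Transformer, storage: Storage) -> None:
    # The pipeline depends only on the interfaces, never on concrete classes.
    storage.write(transformer.transform(rows))


raw = [{"email": "A@x.com"}, {"email": "a@x.com"}]
store = InMemoryStorage()
run_pipeline(raw, LowercaseAndDedupe(), store)  # the upgrade: only this argument changed
```

Because `run_pipeline` is typed against `typing.Protocol` interfaces rather than concrete classes, any component with a matching method can be dropped in without touching the rest of the stack.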

Why composability enables agentic AI

Here's what modular architecture gives you when agents enter the picture:

1. Agents plug into existing workflows

Composable platforms expose functionality through APIs, which means agents can be added, swapped, or retired without disrupting the pipeline. Need an agent to handle real-time data quality checks? Plug it in where it fits.
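One way to picture "plug it in where it fits" is a pipeline that exposes named hook points. The sketch below is illustrative only: `Pipeline`, its stage names, and `quality_check` are hypothetical, and each agent is modeled as a plain function over a batch of rows.

```python
from collections import defaultdict
from typing import Callable

# An agent here is any callable that takes a batch of rows and returns a batch.
Agent = Callable[[list[dict]], list[dict]]


class Pipeline:
    """Hypothetical pipeline exposing named hook points where agents can attach."""

    STAGES = ("after_ingest", "after_transform")

    def __init__(self) -> None:
        self.hooks: dict[str, dict[str, Agent]] = defaultdict(dict)

    def plug_in(self, stage: str, name: str, agent: Agent) -> None:
        if stage not in self.STAGES:
            raise ValueError(f"unknown stage: {stage}")
        self.hooks[stage][name] = agent  # re-registering a name swaps the agent

    def retire(self, stage: str, name: str) -> None:
        self.hooks[stage].pop(name, None)

    def run(self, rows: list[dict]) -> list[dict]:
        for stage in self.STAGES:
            # Only attached agents are shown; each stage's own work is omitted.
            for agent in self.hooks[stage].values():
                rows = agent(rows)
        return rows


def quality_check(rows: list[dict]) -> list[dict]:
    """Hypothetical data quality agent: drops rows with a missing order_id."""
    return [r for r in rows if r.get("order_id") is not None]


pipe = Pipeline()
pipe.plug_in("after_ingest", "dq", quality_check)
cleaned = pipe.run([{"order_id": 1}, {"order_id": None}])
```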

2. Orchestration across modular systems

Agents often trigger work across systems such as warehouses, BI tools, and transformation engines. Multi-agent workflows coordinate through event-based triggers and shared metadata, so orchestration doesn't depend on brittle point-to-point integrations.
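A minimal sketch of event-based triggers plus shared metadata, assuming an in-process publish/subscribe bus as a stand-in for a real message broker. `EventBus`, the `table.loaded` topic, and the `metadata` dictionary are hypothetical. The publisher never calls the transformation agent directly; it only emits an event.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class EventBus:
    """Minimal in-process publish/subscribe bus (stand-in for a real broker)."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        # Topics with no subscribers are simply ignored.
        for handler in self._subscribers[topic]:
            handler(event)


# Shared metadata every agent can read, instead of calling each other directly.
metadata: dict[str, dict] = {}
bus = EventBus()


def on_table_loaded(event: dict) -> None:
    # Hypothetical transformation agent: reacts to the load and records what it did.
    metadata[event["table"]] = {"rows": event["rows"], "transformed": True}


bus.subscribe("table.loaded", on_table_loaded)
bus.publish("table.loaded", {"table": "orders", "rows": 1200})
```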

3. Low-risk experimentation

Modularity makes experimentation cheap. Test new agents, such as an LLM-based classifier or a new optimization agent, inside isolated components of the stack. No rewrites required.
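Isolated experimentation is often done in shadow mode: a candidate agent sees the same inputs as production, but its outputs are only measured, never served. This is one technique among several, not necessarily how any given platform does it. Both classifiers below are hypothetical stubs standing in for a rule-based model and an LLM-based one.

```python
from typing import Callable

Classifier = Callable[[str], str]


def production_classifier(text: str) -> str:
    """Hypothetical existing rule-based classifier."""
    return "refund" if "refund" in text.lower() else "other"


def candidate_classifier(text: str) -> str:
    """Hypothetical new agent under test (stub for an LLM-based classifier)."""
    lowered = text.lower()
    if "refund" in lowered or "money back" in lowered:
        return "refund"
    return "other"


def shadow_run(
    inputs: list[str], prod: Classifier, candidate: Classifier
) -> tuple[list[str], float]:
    """Serve production results; run the candidate on the same inputs only to measure agreement."""
    served = [prod(x) for x in inputs]
    shadow = [candidate(x) for x in inputs]  # never returned to downstream consumers
    agreement = sum(a == b for a, b in zip(served, shadow)) / len(inputs)
    return served, agreement


served, agreement = shadow_run(
    ["I want a refund", "Where is my parcel?", "Can I get my money back?"],
    production_classifier,
    candidate_classifier,
)
```

Disagreements (here, the "money back" message) are exactly the cases worth reviewing before the candidate is promoted.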

4. Resilient pipelines

When one component fails, agents reroute tasks through alternative pathways. Pipelines stay up. Humans get alerted when something needs attention.
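A sketch of rerouting, assuming each load path is a function that either succeeds or raises. `load_with_fallback`, the two paths, and the `alerts` list are hypothetical; in this design a human is alerted only once every alternative has failed, which is one reasonable policy among several.

```python
import logging
from typing import Callable

log = logging.getLogger("pipeline")

Loader = Callable[[list[dict]], str]


def load_with_fallback(
    rows: list[dict], paths: list[tuple[str, Loader]], alerts: list[str]
) -> str:
    """Try each loading path in order; alert a human only if every path fails."""
    for name, loader in paths:
        try:
            return loader(rows)
        except Exception as exc:  # a real agent would catch narrower errors
            log.warning("path %s failed: %s; rerouting", name, exc)
    alerts.append(f"all {len(paths)} load paths failed for {len(rows)} rows")
    raise RuntimeError("no load path succeeded")


def primary_warehouse(rows: list[dict]) -> str:
    raise ConnectionError("warehouse unreachable")  # simulated outage


def staging_bucket(rows: list[dict]) -> str:
    return f"staged {len(rows)} rows"  # hypothetical alternative pathway


alerts: list[str] = []
result = load_with_fallback(
    [{"id": 1}, {"id": 2}],
    [("warehouse", primary_warehouse), ("staging", staging_bucket)],
    alerts,
)
```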

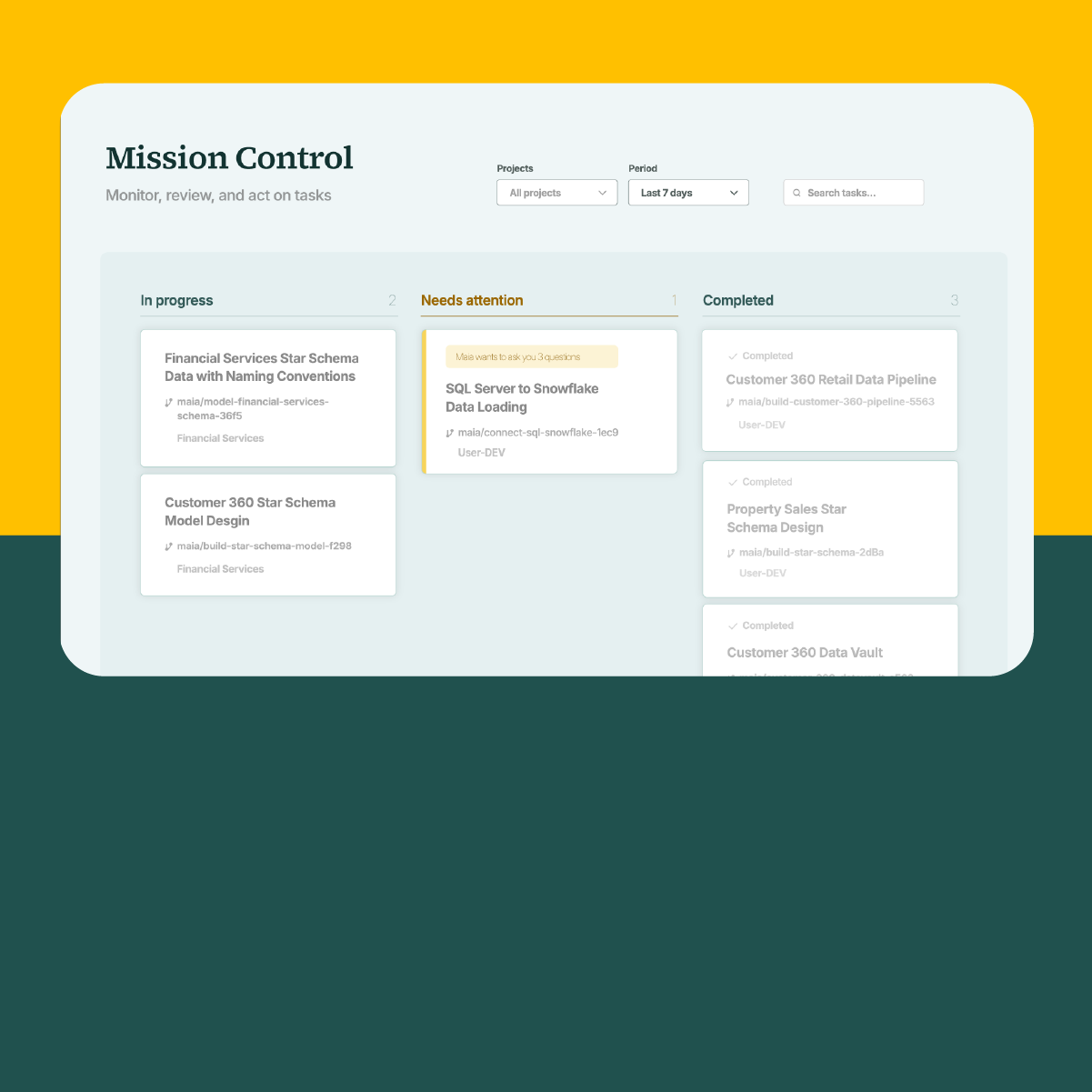

Maia: AI Data Automation, built for composability

Maia is the industry's first AI Data Automation (ADA) platform. It uses AI to eliminate manual data work, autonomously creating, managing, and evolving data products that serve both human users and AI agents at enterprise scale.

Maia is built on three tightly integrated components:

- Maia Team is an always-on workforce of AI agents that handles operational data work: building, modifying, optimizing, and maintaining pipelines and data products

- Maia Context Engine is the intelligence layer that keeps automation aligned with your enterprise reality. It captures business rules, architecture standards, governance requirements, and institutional knowledge

- Maia Foundation is the secure, governed, cloud-native infrastructure where autonomous execution happens. It's what makes AI Data Automation viable in real enterprise environments

Maia Foundation is composable by design. It's API-driven, built to integrate with the tools you already run, and natively supports Snowflake, Databricks, and Amazon Redshift through a pushdown architecture that generates SQL optimized for each platform. It supports real-time decision-making through an event-driven architecture and ships with 130+ pre-built connectors.

Maia also supports Agent-to-Agent (A2A) orchestration via the Model Context Protocol (MCP), so Maia agents can coordinate with other agents across your stack, not just with the pipelines you own.

The result: customers reduce manual data work by more than 90%, move delivery from weeks to hours, and scale output without adding headcount. That's the productivity multiplier of AI Data Automation: 10–40x compared to human-scale work.

A real-world example: multi-agent pipelines in e-commerce

Picture a modern e-commerce data pipeline run by three specialized agents:

Agent 1: Intelligent ingestion

- Monitors multiple sources: web analytics, CRM, and inventory

- Adapts ingestion frequency based on business events like flash sales or seasonal peaks

- Handles schema changes without manual intervention
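Adaptive ingestion frequency can be as simple as shrinking the polling interval while business events are active. The sketch below assumes a fixed table of event multipliers; the event names and every number in it are hypothetical placeholders, not recommended values.

```python
from datetime import timedelta

# Hypothetical tuning values; real ones would come from business context.
BASE_INTERVAL = timedelta(minutes=15)
EVENT_MULTIPLIERS = {"flash_sale": 0.1, "seasonal_peak": 0.25}
MIN_INTERVAL = timedelta(minutes=1)


def polling_interval(active_events: set[str]) -> timedelta:
    """Poll more often while business events are active, never faster than MIN_INTERVAL."""
    # The most urgent active event wins; unknown events leave the interval unchanged.
    factor = min((EVENT_MULTIPLIERS.get(e, 1.0) for e in active_events), default=1.0)
    return max(BASE_INTERVAL * factor, MIN_INTERVAL)
```

For example, during a flash sale this polls every 90 seconds instead of every 15 minutes.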

Agent 2: Dynamic transformation

- Applies business rules that evolve with the data

- Detects and corrects data quality issues in real time

- Optimizes transformation logic as volumes shift

Agent 3: ML operations

- Monitors model performance and triggers retraining when accuracy drops

- Manages A/B tests for new algorithms against production models

- Deploys winning models after validation
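Two of these responsibilities reduce to simple checks: is production accuracy below a floor, and did the A/B challenger pass validation while beating production by a margin? The thresholds and the `ModelStats` type below are hypothetical placeholders for illustration.

```python
from dataclasses import dataclass


@dataclass
class ModelStats:
    name: str
    accuracy: float


RETRAIN_THRESHOLD = 0.85  # hypothetical accuracy floor
MIN_UPLIFT = 0.01  # hypothetical margin a challenger must beat production by


def needs_retraining(prod: ModelStats) -> bool:
    """Trigger retraining when production accuracy drops below the floor."""
    return prod.accuracy < RETRAIN_THRESHOLD


def pick_winner(prod: ModelStats, challenger: ModelStats, validated: bool) -> ModelStats:
    """Promote the A/B challenger only if it passed validation and clearly beats production."""
    if validated and challenger.accuracy >= prod.accuracy + MIN_UPLIFT:
        return challenger
    return prod
```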

Each agent operates independently inside the composable architecture, but coordinates through shared APIs and event streams. When the ingestion agent spots a data quality issue, it alerts the transformation agent. The transformation agent applies corrections. The ML agent pauses updates until the data is clean again.

No human touches any of it. Until something needs a human decision, at which point the system says so.
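The coordination described in the e-commerce example can be sketched with an in-process event stream, assuming a queue as a stand-in for shared event streams and a hypothetical correction rule that drops rows missing a price. Agent names, topics, and the `drain` loop are illustrative, not a product API. No agent calls another directly: ingestion publishes `quality_issue`, and the transformation and ML agents react independently.

```python
from collections import deque
from typing import Callable

Handler = Callable[[dict], None]

stream: deque = deque()  # stand-in for a shared event stream
subscribers: dict[str, list[Handler]] = {}
history: list[str] = []  # what the ML agent did, in order


def on(topic: str) -> Callable[[Handler], Handler]:
    """Register the decorated function as a subscriber to `topic`."""
    def register(fn: Handler) -> Handler:
        subscribers.setdefault(topic, []).append(fn)
        return fn
    return register


def ingestion_agent(batch: list[dict]) -> None:
    """Publishes an event instead of calling the other agents directly."""
    has_gaps = any(r.get("price") is None for r in batch)
    stream.append(("quality_issue" if has_gaps else "data_clean", {"batch": batch}))


@on("quality_issue")
def ml_agent_pause(event: dict) -> None:
    history.append("paused")


@on("quality_issue")
def transformation_agent(event: dict) -> None:
    # Hypothetical correction rule: drop rows that are missing a price.
    fixed = [r for r in event["batch"] if r.get("price") is not None]
    stream.append(("data_clean", {"batch": fixed}))


@on("data_clean")
def ml_agent_resume(event: dict) -> None:
    history.append(f"resumed:{len(event['batch'])}")


def drain() -> None:
    """Deliver queued events in arrival order until the stream is empty."""
    while stream:
        topic, event = stream.popleft()
        for handler in subscribers.get(topic, []):
            handler(event)


ingestion_agent([{"sku": "A", "price": 9.5}, {"sku": "B", "price": None}])
drain()
```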

For a real-world version of this, see how St James's Place automated their data operations with Maia.

Architectural principles for agentic success

Agentic workflows don't work on just any architecture. They need a few things to be true:

Event-driven communication. Agents communicate through events, not direct calls. That's what gives you loose coupling and fault tolerance. When one agent finishes a task, it publishes an event. Other agents react.
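Loose coupling pays off in fault tolerance when the bus isolates subscribers from one another. In this hypothetical sketch, a failing agent's event is parked in a dead-letter list for later retry or inspection instead of blocking other agents; `TolerantBus` and both agents are illustrative names only.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class TolerantBus:
    """Publish/subscribe bus where one failing subscriber cannot block the others."""

    def __init__(self) -> None:
        self.subscribers: dict[str, list[Handler]] = defaultdict(list)
        # (topic, handler name, event) tuples kept for retry or inspection.
        self.dead_letters: list[tuple[str, str, dict]] = []

    def subscribe(self, topic: str, handler: Handler) -> None:
        self.subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        for handler in self.subscribers[topic]:
            try:
                handler(event)
            except Exception:
                # Park the event instead of failing the publisher or later subscribers.
                self.dead_letters.append((topic, handler.__name__, event))


received: list[str] = []


def flaky_agent(event: dict) -> None:
    raise TimeoutError("downstream API timed out")  # simulated failure


def reporting_agent(event: dict) -> None:
    received.append(event["task"])


bus = TolerantBus()
bus.subscribe("task.done", flaky_agent)
bus.subscribe("task.done", reporting_agent)
bus.publish("task.done", {"task": "load_orders"})
```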

Stateless agent design. Agents keep minimal state and rely on shared data stores for persistence. That makes them easy to scale, replace, or recover without losing context.

Observability by design. Every action gets logged and monitored. You need full visibility into what autonomous agents are deciding and why, both for debugging and for continuous improvement.
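A minimal sketch of observability by design, assuming each agent action returns both a decision and a human-readable reason. The `observed` decorator, the `audit_trail` list, and the decision rule are hypothetical; in practice the records would go to a logging or tracing backend rather than a list.

```python
import functools
import json
import logging
import time
from typing import Callable

logger = logging.getLogger("agents.audit")
audit_trail: list[dict] = []  # stand-in for a log or trace backend


def observed(agent: str) -> Callable:
    """Record every call: which agent acted, what it decided, why, and how long it took."""
    def decorator(fn: Callable[..., tuple[str, str]]) -> Callable[..., tuple[str, str]]:
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            decision, reason = fn(*args, **kwargs)
            record = {
                "agent": agent,
                "action": fn.__name__,
                "decision": decision,
                "reason": reason,
                "ms": round((time.perf_counter() - start) * 1000, 2),
            }
            audit_trail.append(record)
            logger.info(json.dumps(record))
            return decision, reason
        return wrapper
    return decorator


@observed(agent="transformation")
def choose_strategy(row_count: int) -> tuple[str, str]:
    # Hypothetical decision rule with an explicit, loggable reason.
    if row_count > 1_000_000:
        return "incremental", f"{row_count} rows exceeds full-refresh limit"
    return "full_refresh", f"{row_count} rows is small enough to rebuild"


choose_strategy(5_000_000)
```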

Human-in-the-loop integration. Agents operate autonomously, but escalate to humans when confidence drops below defined thresholds. Autonomy with a safety net, not autonomy without oversight.
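Confidence-based escalation can be sketched as a router that applies high-confidence proposals and queues the rest for human review. The 0.8 threshold, `Proposal`, and `Router` are hypothetical; real thresholds would depend on how risky each kind of action is.

```python
from dataclasses import dataclass, field

CONFIDENCE_THRESHOLD = 0.8  # hypothetical; tune per action type and risk level


@dataclass
class Proposal:
    action: str
    confidence: float


@dataclass
class Router:
    applied: list[str] = field(default_factory=list)
    review_queue: list[Proposal] = field(default_factory=list)

    def handle(self, proposal: Proposal) -> str:
        """Act autonomously at or above the threshold; otherwise hand the decision to a human."""
        if proposal.confidence >= CONFIDENCE_THRESHOLD:
            self.applied.append(proposal.action)
            return "applied"
        self.review_queue.append(proposal)
        return "escalated"


router = Router()
router.handle(Proposal("cast order_date to DATE", 0.97))
router.handle(Proposal("drop column legacy_flag", 0.55))
```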

Where this is heading

A few things are shaping the next wave of agentic data engineering:

- Richer multi-agent collaboration. Some agents will act as orchestrators, managing teams of specialized agents rather than doing the work themselves

- Deeper cloud integration. Agents will provision resources, manage costs, and optimize performance across cloud providers automatically

- Smarter learning. Next-generation workflows will adapt not just to data patterns but to organizational preferences and business context

The freedom to do more

Composable architecture isn't a trend. It's the shift that makes scalable agentic workflows possible in the first place.

As AI adoption accelerates, composability separates the flexible from the fragile. The organizations that get this right will adapt faster, ship more, and stop treating data engineering as the bottleneck between ambition and delivery.

That's the freedom Maia is built to deliver. Freedom to say yes to more AI initiatives. Freedom to focus on innovation instead of maintenance. Freedom from backlog, hiring constraints, and pipelines held together with string. Freedom to lead the business, not just keep the data running.

Enjoy the freedom to do more with Maia on your side.
